AI Data Centers Are Scaling Faster Than the Grid

Artificial intelligence is driving one of the fastest expansions the data center industry has ever seen. From large language model training to enterprise AI deployment, organizations worldwide are investing heavily in new compute infrastructure. The result is a rapid rise in demand for specialized AI data centers (AIDC).

But as the industry scales, a new constraint is coming into view. In many regions, AI data centers are being built faster than the electrical grid can expand. Power infrastructure, not compute hardware, is quickly becoming one of the most important variables in AI infrastructure planning.

The Growing Demand for AI Compute Infrastructure

AI workloads require enormous amounts of computing power. Modern training clusters rely on thousands of accelerators linked by high-bandwidth networks, often running continuously for extended periods.

This computing density translates directly into energy demand. Compared with traditional enterprise IT environments, AI data centers typically draw significantly more power per rack and place heavier demands on electrical and cooling infrastructure.

As AI adoption expands across industries such as technology, finance, healthcare, and research, the number of organizations deploying dedicated AI infrastructure continues to grow.

AI Data Centers Are Expanding Rapidly

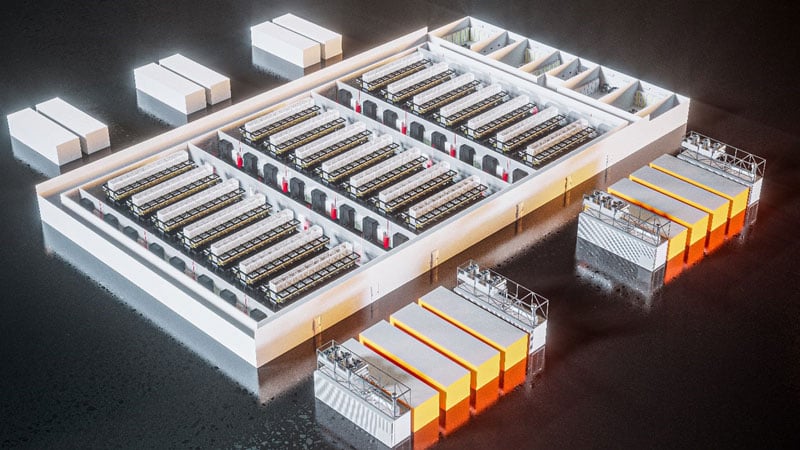

Major cloud providers and technology companies are investing billions of dollars in next-generation AI infrastructure. New facilities are being built specifically to host large-scale AI clusters and support high-performance computing environments.

Across North America, Europe, and Asia, data center development pipelines have accelerated dramatically in recent years. Many of these new projects are designed from the ground up as AI data centers rather than traditional enterprise facilities.

However, the speed of this expansion is beginning to expose an underlying challenge: access to sufficient electrical capacity.

Power Infrastructure Is Becoming the Bottleneck

Building a data center involves far more than constructing a physical facility. Reliable access to electricity is essential, and large-scale AI infrastructure requires significant power capacity.

Expanding electrical infrastructure typically involves building new substations, adding transmission lines, and planning for regional grid capacity. These projects often require lengthy permitting processes, regulatory approvals, and multi-year construction timelines.

By contrast, data center facilities themselves can often be completed much faster. This mismatch in timelines means that power infrastructure can become a limiting factor for AI data center deployment.

Grid Constraints Are Already Emerging

In several established data center markets, developers are encountering delays related to grid capacity. When multiple high-power facilities are planned within the same region, local electrical networks may require upgrades before additional load can be supported.

As a result, power availability is increasingly being evaluated during the earliest stages of AI data center planning. Developers must work closely with utilities and regional infrastructure planners to ensure that long-term energy demand can be met.

In some cases, access to power has become one of the key factors determining where new AI infrastructure can be built.

Why Flexible Infrastructure Deployment Matters

The rapid growth of AI workloads means that infrastructure planning must be both scalable and adaptable. Rather than committing to massive capacity from the start, many organizations are exploring more flexible approaches to data center expansion.

Modular infrastructure strategies can allow capacity to grow incrementally as additional power resources become available. This phased approach helps align AI compute expansion with the realities of regional power infrastructure.

For organizations deploying AIDC environments, flexibility in how infrastructure is deployed can significantly reduce risk while maintaining the ability to scale.

The Infrastructure Race Behind AI

Artificial intelligence is often discussed in terms of models, software, and algorithms. Yet behind every breakthrough is a physical infrastructure layer — data centers, power systems, and global networks that make large-scale computing possible.

Companies that can deploy AI infrastructure quickly and reliably gain a meaningful advantage. Faster access to compute resources enables faster model development, faster product launches, and ultimately faster innovation.

This is why AI infrastructure strategy is rapidly becoming a central focus for technology organizations worldwide.

The Bottom Line

AI is accelerating the growth of global compute demand at an unprecedented pace. As new AI data centers continue to come online, the supporting power infrastructure must evolve just as quickly.

In many regions, the expansion of AIDC facilities is already moving faster than the electrical grid can adapt. Addressing this gap between compute growth and power infrastructure will be one of the defining challenges for the data center industry in the years ahead.

For organizations building the next generation of AI infrastructure, understanding the relationship between compute scale and power availability will be critical to long-term success.